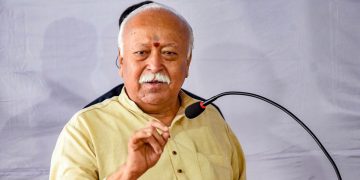

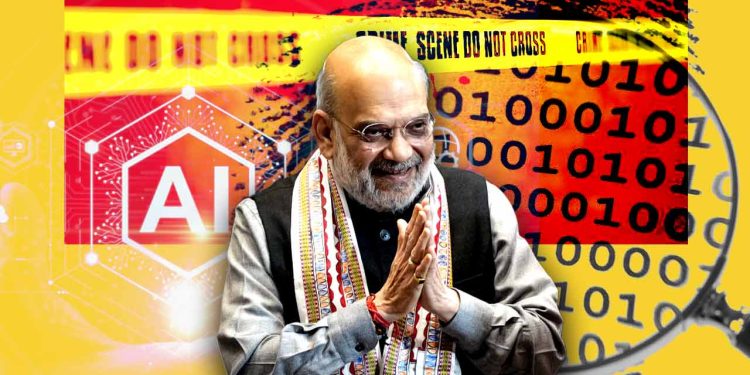

India has taken a significant step in its counterterrorism effort as Home Minister Amit Shah authorised the rollout of a new artificial intelligence system, colloquially dubbed ‘Gandeev’, to central and state investigative units such as the National Investigation Agency (NIA) and Anti-Terrorism Squads (ATS). Ministers described the platform as an advanced operational tool that will assist agencies in identifying, analysing and disrupting terror networks faster than before.

How India's AI counterterrorism system Gandeev works

The Gandeev system combines machine learning algorithms with large datasets to spot patterns and anomalies that might elude traditional investigative methods. Key components include automated image and voice recognition, real-time social media monitoring, link analysis to map associations between suspects, and geospatial tools to track movements and hotspots. Analysts can feed case files into the platform and receive ranked leads, probable connections and suggested avenues for follow-up.
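
No technical details of Gandeev's internals have been published, so the following is only a minimal sketch of what "link analysis" with "ranked leads" can mean in general. It assumes associations are available as simple pairs of identifiers and ranks each entity by how many distinct others it is connected to. The function name `rank_by_connections` and the sample data are hypothetical and are not drawn from the platform.

```python
from collections import defaultdict


def rank_by_connections(links):
    """Rank entities by the number of distinct entities they are linked to.

    `links` is an iterable of (entity_a, entity_b) pairs. This is a generic
    degree-count heuristic used for illustration, not Gandeev's actual method.
    """
    neighbours = defaultdict(set)
    for a, b in links:
        neighbours[a].add(b)
        neighbours[b].add(a)
    # Highest number of connections first; ties are broken alphabetically.
    return sorted(
        ((name, len(linked)) for name, linked in neighbours.items()),
        key=lambda item: (-item[1], item[0]),
    )


# Hypothetical association pairs, e.g. extracted from case files.
sample_links = [("A", "B"), ("A", "C"), ("B", "C"), ("C", "D"), ("E", "F")]
ranking = rank_by_connections(sample_links)

for name, degree in ranking:
    print(f"{name}: {degree} connection(s)")
```

In this toy data, entity C surfaces first because it touches three others. A real system would presumably weigh the strength and reliability of each link, which is why a ranked lead still needs human verification.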

Officials emphasise that Gandeev is an investigative aid rather than an autonomous decision-maker. Human analysts and investigators retain final authority over intelligence assessments and operational actions. The system is intended to reduce the time required to process large volumes of digital evidence, freeing investigators to focus on verification and fieldwork.

There are practical advantages in law enforcement scenarios where minutes matter. For example, rapid cross-referencing of voice samples or images from multiple sources can accelerate the identification of suspects. Geotemporal clustering can reveal the emergence of coordinated activity. By automating routine data parsing, Gandeev aims to improve case throughput and reduce backlogs in complex terrorism inquiries.
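
To make "geotemporal clustering" concrete, here is a rough sketch under stated assumptions: events arrive as (latitude, longitude, Unix timestamp) tuples, and a cluster is simply any grid cell and time window holding at least a minimum number of events. The function `find_clusters`, its default parameters and the coordinates are all illustrative; the article's sources do not say which clustering technique Gandeev uses.

```python
import math
from collections import Counter


def find_clusters(events, cell_deg=0.01, window_s=3600, min_events=3):
    """Return grid-cell/time-window buckets containing at least `min_events`.

    Each event is (lat, lon, unix_ts). Events are grouped by a fixed-size
    latitude/longitude cell and a fixed-length time window. This simple
    bucketing is an assumption made for illustration only.
    """
    counts = Counter()
    for lat, lon, ts in events:
        key = (
            math.floor(lat / cell_deg),   # latitude cell index
            math.floor(lon / cell_deg),   # longitude cell index
            int(ts // window_s),          # time window index
        )
        counts[key] += 1
    return {key: n for key, n in counts.items() if n >= min_events}


# Hypothetical events: three close together in space and time, one far away.
sample_events = [
    (10.001, 20.002, 1000),
    (10.004, 20.005, 1500),
    (10.008, 20.007, 2000),
    (50.500, 60.500, 1200),
]
clusters = find_clusters(sample_events)
print(clusters)
```

Fixed grids can split a genuine cluster that straddles a cell boundary, so density-based methods are a common alternative. The bucketing here is chosen only because it is easy to follow.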

At the same time, political opponents have questioned the scope of the technology and the safeguards surrounding its deployment. Critics have warned against mission creep and potential infringement on civil liberties if oversight and legal safeguards are not robust. Government spokespeople responded by pointing to proposed audit mechanisms, role-based access controls, and judicial authorisation for intrusive queries as part of the framework for deployment.
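
The safeguards named by spokespeople (audit mechanisms, role-based access controls and judicial authorisation for intrusive queries) can be pictured with a small sketch. Everything in it is hypothetical: the role names, the query types, the `warrant_id` parameter and the `AccessGate` class are assumptions made for illustration, since the actual framework has not been published.

```python
from dataclasses import dataclass, field

# Hypothetical query types that would need judicial authorisation.
INTRUSIVE_QUERIES = {"voice_match", "social_media_monitor"}

# Hypothetical mapping of roles to the query types they may run.
ROLE_PERMISSIONS = {
    "analyst": {"link_analysis"},
    "senior_investigator": {"link_analysis", "voice_match", "social_media_monitor"},
}


@dataclass
class AccessGate:
    """Checks role and warrant before a query, and logs every attempt."""

    audit_log: list = field(default_factory=list)

    def authorise(self, user, role, query_type, warrant_id=None):
        allowed = query_type in ROLE_PERMISSIONS.get(role, set())
        # Intrusive queries additionally require a warrant reference.
        if allowed and query_type in INTRUSIVE_QUERIES and warrant_id is None:
            allowed = False
        # Record the attempt whether or not it was permitted.
        self.audit_log.append(
            {"user": user, "role": role, "query": query_type,
             "warrant": warrant_id, "allowed": allowed}
        )
        return allowed


gate = AccessGate()
results = [
    gate.authorise("u1", "analyst", "link_analysis"),
    gate.authorise("u1", "analyst", "voice_match"),
    gate.authorise("u2", "senior_investigator", "voice_match"),
    gate.authorise("u2", "senior_investigator", "voice_match", warrant_id="W-1"),
]
print(results)
```

Logging denied attempts as well as permitted ones is a deliberate choice in this sketch, because an auditor looking for mission creep would want to see what was tried, not only what was allowed.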

Legal experts note that technology alone cannot solve structural challenges in intelligence work. Accurate results depend on the quality of training data, transparency about algorithmic behaviour, and continuous auditing to prevent bias. The administration has said it will engage independent auditors and privacy advisers to validate the platform’s performance and compliance with statutory safeguards.

Operationally, Gandeev will be introduced in phases across state and central units, starting with pilot projects in select agencies. Training programmes are planned to familiarise investigators with the system’s outputs and limits. Authorities say the phased approach will allow technical adjustments and policy refinements before fuller adoption.

For a nation facing evolving asymmetric threats, combining advanced analytics with traditional investigative craft is a familiar strategy. The challenge will be ensuring that enhanced capability is matched by legal clarity, transparent oversight and clear accountability, so public confidence grows as the technology is used. Whether Gandeev measurably improves prosecutions and prevents attacks will be the decisive test for both policymakers and the agencies entrusted with its operation.

Key Takeaways:

- India's AI counterterrorism system, Gandeev, is being deployed to bolster NIA and ATS capabilities against organised terror threats.

- The system uses machine learning for pattern detection, voice and image analysis, and geospatial intelligence to speed investigations.

- The government says safeguards and human oversight will govern use, while opposition parties have raised privacy and misuse concerns.